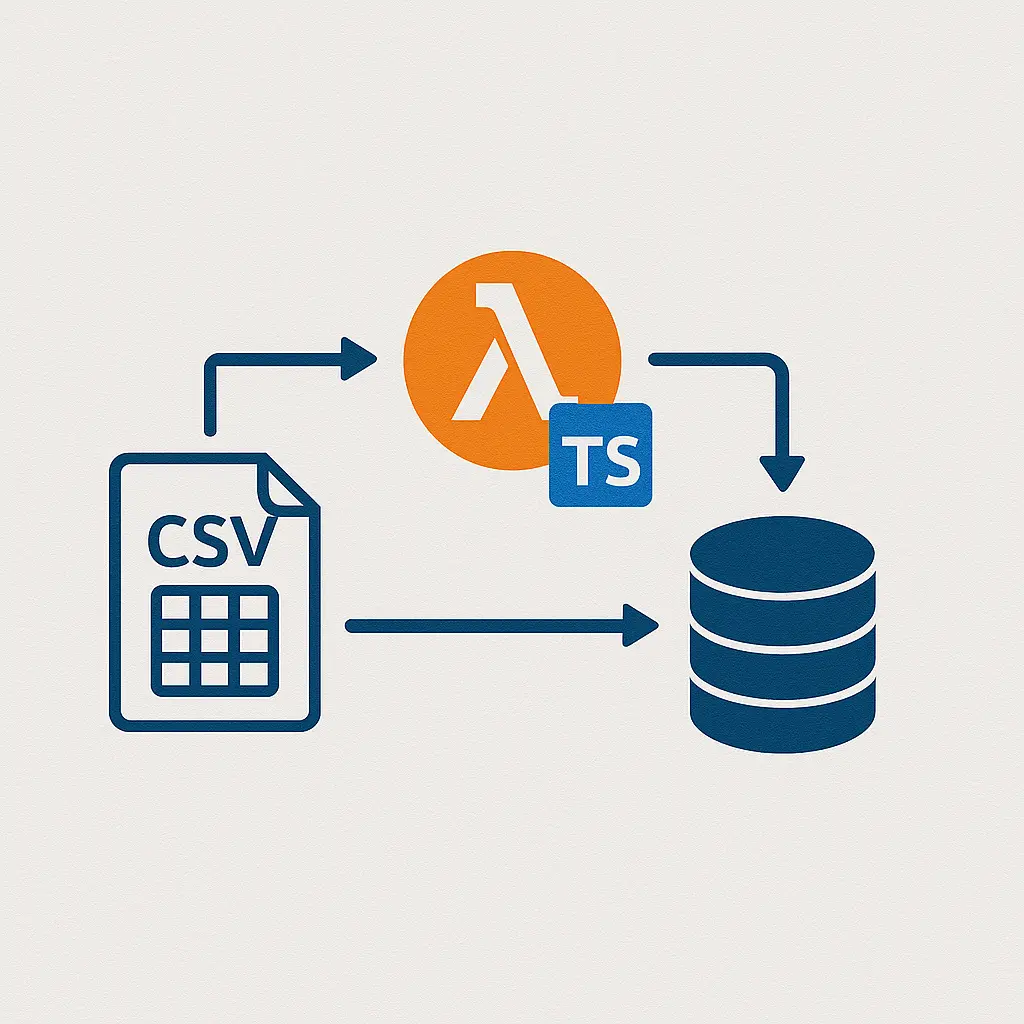

I had to load a big CSV into DynamoDB. It was for a youth soccer club site I run on weekends. Parents sent player data in spreadsheets. I needed that data in a clean table, fast. So I built a tiny Lambda in TypeScript that reads a CSV from S3 and writes rows to DynamoDB in batches. Sounds simple, right? It mostly was. But a few bumps made me sip my coffee extra slow.

Here’s my honest take, plus the exact code I used.

If you're after the expanded, tutorial-style version with extra screenshots, it's all captured in this detailed write-up.

Why I even needed this

I had player sign-ups in one messy CSV. The club wanted search by team and by email. I already had a DynamoDB table called Players. It used:

- PK: playerId (a UUID)

- SK: teamId (string)

I also had a GSI for email lookups. Nothing fancy. I just needed a clean, safe way to push rows in, with retries, and not blow my write capacity.

The setup that actually worked for me

- Trigger: S3 upload (put the CSV in a bucket, Lambda runs)

- Runtime: Node.js 20.x

- Lang: TypeScript

- Parser: csv-parse

- AWS SDK: v3 (DynamoDBDocumentClient)

- Build: esbuild (kept the bundle tiny)

- Lambda memory: 512 MB

- Timeout: 5 minutes (I later set 10 for large files)

- DynamoDB write: batch write with backoff on UnprocessedItems

If you want a deeper dive into the parser itself, the csv.js parse documentation is an excellent reference—it's where I double-checked options like bom and relax_quotes.

A concise DynamoDB batch-write primer that helped me tighten this flow is over here.

I know some folks glue stuff with Glue. I kept it simple. One Lambda, one job.

A real CSV I used (trimmed)

playerId,teamId,firstName,lastName,email,birthYear

p-1001,u10-blue,Sam,Lopez,sam.lopez@example.com,2014

p-1002,u10-blue,Ana,Ruiz,ana.ruiz@example.com,2014

p-1003,u12-red,Jai,Patel,jai.patel@example.com,2012

Note: the CSV came from Numbers on a Mac first. Then someone opened it in Excel on Windows. Then it had a BOM at the start. That tiny BOM made my first run hiccup. More on that later.
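To see why a BOM trips things up, here is a small dependency-free sketch. It is not how csv-parse works internally, and `stripBom` and `firstHeader` are hypothetical helpers made up for this example. The point is only that the first header stops matching `playerId` until the BOM is gone.

```typescript
// Hypothetical helpers for illustration only.
// In the real function, csv-parse's `bom: true` option does the stripping.
const BOM = "\uFEFF"; // U+FEFF, the byte order mark some spreadsheet exports prepend

// Remove a leading BOM if one is present.
function stripBom(text: string): string {
  return text.charCodeAt(0) === 0xfeff ? text.slice(1) : text;
}

// Return the first column name of the header line.
function firstHeader(csv: string): string {
  const [headerLine = ""] = csv.split(/\r?\n/);
  const [first = ""] = headerLine.split(",");
  return first;
}

const raw =
  BOM +
  "playerId,teamId,firstName,lastName,email,birthYear\r\n" +
  "p-1001,u10-blue,Sam,Lopez,sam.lopez@example.com,2014";

console.log(firstHeader(raw) === "playerId");           // false: the key is "\uFEFFplayerId"
console.log(firstHeader(stripBom(raw)) === "playerId"); // true
```

With a header-keyed parser, that invisible character means `row.playerId` comes back undefined for every row, which is an easy thing to misread as bad data.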

My Lambda code (TypeScript)

This reads the S3 file stream, parses CSV row by row, and writes to DynamoDB in batches of 25. It handles retries for unprocessed items. It logs a tiny summary at the end. Nothing wild.

While wiring this up, I also played with using named arguments in TypeScript constructors to keep things readable—here’s my quick take on that experiment.

// code...
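Here is a minimal sketch of the batch-and-retry idea described above, not the exact handler. It assumes a hypothetical `SendBatch` function that stands in for the DynamoDB batch write call and resolves with whatever items came back unprocessed. The names `chunk`, `backoffMs`, and `writeAll`, plus the 100 ms base delay and 5 attempts, are choices made for this example.

```typescript
// Sketch only: `SendBatch` is a hypothetical stand-in for a DynamoDB batch write.
// It takes up to 25 items and resolves with the items the service left unprocessed.
type Item = Record<string, unknown>;
type SendBatch = (items: Item[]) => Promise<Item[]>;

const BATCH_SIZE = 25; // BatchWriteItem accepts at most 25 put/delete requests per call

// Split rows into chunks of at most `size`.
function chunk<T>(rows: T[], size: number): T[][] {
  const out: T[][] = [];
  for (let i = 0; i < rows.length; i += size) out.push(rows.slice(i, i + size));
  return out;
}

// Exponential backoff with a cap: 100 ms, 200 ms, 400 ms, ... up to maxMs.
function backoffMs(attempt: number, baseMs = 100, maxMs = 5000): number {
  return Math.min(maxMs, baseMs * Math.pow(2, attempt));
}

const sleep = (ms: number) => new Promise<void>((resolve) => setTimeout(resolve, ms));

// Write all rows, resending only the unprocessed items after each attempt.
// Returns the items that were still unprocessed after `maxAttempts` tries.
async function writeAll(
  rows: Item[],
  send: SendBatch,
  maxAttempts = 5,
  wait: (ms: number) => Promise<void> = sleep,
): Promise<Item[]> {
  const failed: Item[] = [];
  for (const batch of chunk(rows, BATCH_SIZE)) {
    let pending = batch;
    for (let attempt = 0; attempt < maxAttempts && pending.length > 0; attempt++) {
      if (attempt > 0) await wait(backoffMs(attempt - 1)); // pause before each retry
      pending = await send(pending);
    }
    failed.push(...pending);
  }
  return failed;
}
```

In a real Lambda, `send` would wrap a `BatchWriteCommand` from `@aws-sdk/lib-dynamodb` and map the response's `UnprocessedItems` back into a plain array. Keeping it injectable also makes the retry loop easy to test without touching AWS.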

Env vars I set on the function:

- TABLE_NAME = Players

Role for the function needed S3 read and DynamoDB write. Here’s the policy chunk that worked for me.

// policy...

Build tip: I used esbuild with “bundle: true” and target “node20”. I kept csv-parse in the bundle. No layer needed.

Things that made me grin

- Batch writes are fast. With 25 items per call, it felt smooth.

- The doc client saves time. No manual marshalling.

- Backoff on UnprocessedItems worked well. It kept me from slamming the table.

- Event-driven feels nice. I upload to S3, it runs. Done.

I also liked CloudWatch logs here. I watched it tick through batches while I ate a granola bar. Simple joy.

Things that bugged me (but I fixed them)

- BOM at the start of the file. The parser with bom: true fixed that.

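- Commas inside quotes. Names like “Smith, Jr.” broke my first test. csv-parse handles commas in properly quoted fields by default, so the likely culprit was stray or unbalanced quotes in the export. relax_quotes helped with those.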

- Throttling on big runs. When WCU was low, I saw retries. Backoff handled it, but it slowed the run.

- Bad rows. Missing teamId or email? I skipped and logged them. I later wrote them to a dead letter S3 key.

- Packaging. If your bundle grows, Lambda can get chunky. esbuild kept it lean, but I had to prune dev stuff.

One more small thing. Emails with uppercase letters caused dupes in my GSI. I normalized to lower case. That saved me later.
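The skip-and-normalize step can be sketched roughly like this. The `RawRow` and `PlayerItem` shapes are assumptions based on the CSV header shown earlier, and `toItem` is a hypothetical helper. This version treats a missing playerId as a skip as well, which is one possible choice rather than the only one.

```typescript
// Hypothetical row shape based on the CSV header; every parsed value arrives as a string.
interface RawRow {
  playerId?: string;
  teamId?: string;
  firstName?: string;
  lastName?: string;
  email?: string;
  birthYear?: string;
}

interface PlayerItem {
  playerId: string;
  teamId: string;
  firstName: string;
  lastName: string;
  email: string;
  birthYear?: number;
}

type RowResult =
  | { ok: true; item: PlayerItem }
  | { ok: false; reason: string; row: RawRow };

// Trim and lower-case so "Sam@X.com" and "sam@x.com" land on the same GSI key.
function normalizeEmail(email: string): string {
  return email.trim().toLowerCase();
}

// Turn one parsed CSV row into a table item, or explain why it was skipped.
function toItem(row: RawRow): RowResult {
  const playerId = (row.playerId ?? "").trim();
  const teamId = (row.teamId ?? "").trim();
  const email = normalizeEmail(row.email ?? "");
  if (!playerId) return { ok: false, reason: "missing playerId", row };
  if (!teamId) return { ok: false, reason: "missing teamId", row };
  if (!email) return { ok: false, reason: "missing email", row };

  const item: PlayerItem = {
    playerId,
    teamId,
    email,
    firstName: (row.firstName ?? "").trim(),
    lastName: (row.lastName ?? "").trim(),
  };
  const year = parseInt(row.birthYear ?? "", 10);
  if (!isNaN(year)) item.birthYear = year; // leave birthYear off if it isn't a number
  return { ok: true, item };
}
```

Rows that come back with `ok: false` carry the original row and a reason, so they can be logged or written out for follow-up without losing the parent's original input.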

Real numbers from my run

- Row count: ~18,200

- Lambda memory: 512 MB

- Timeout: 10 minutes

- Table WCU: 500

- Total time: about 3.5 minutes

- Cost: cents. Like, under a coffee.

Could it be faster? Sure. But I cared more about safe writes and clean logs than raw speed.
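For a sense of scale, here is the arithmetic behind those numbers. The inputs are the rounded figures above, so treat the outputs as rough estimates rather than measurements.

```typescript
// Figures from the run above; derived values are plain arithmetic, not measurements.
const rows = 18200;
const batchSize = 25;
const seconds = 3.5 * 60; // 210

const batchCalls = Math.ceil(rows / batchSize); // 728 calls if nothing is retried
const rowsPerSecond = rows / seconds;           // about 86.7
const callsPerSecond = batchCalls / seconds;    // about 3.5

console.log({
  batchCalls,
  rowsPerSecond: rowsPerSecond.toFixed(1),
  callsPerSecond: callsPerSecond.toFixed(1),
});
```

If each item is under 1 KB, that works out to roughly 87 write units per second on the base table, before counting writes to the GSI.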

A few tiny helpers that paid off

- Idempotency: I used a stable playerId when it existed. If not, I made one from email + name + team. That kept reruns from making dupes.

- Conditional writes: In another run, I used a ConditionExpression to avoid overwriting live data. For this batch job, I kept it simple.

- Dead letter: I sent bad rows (JSON) to a “failed/” folder in S3. Parents do typos. It happens.

- Partial keys: I logged any row missing playerId or teamId. Later I sent those rows back to the team lead with notes.
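The idempotency idea from the first bullet could look something like this. It is a sketch under assumptions: the SHA-256 hash, the `p-` prefix, the 16-character length, and the helper names `fallbackPlayerId` and `resolvePlayerId` are all choices made for this example, not the original code.

```typescript
import { createHash } from "node:crypto";

// Hypothetical fallback ID: hash the normalized email, name, and team so the same
// person on the same team maps to the same playerId on every rerun.
function fallbackPlayerId(
  email: string,
  firstName: string,
  lastName: string,
  teamId: string,
): string {
  const parts = [email, firstName, lastName, teamId].map((p) => p.trim().toLowerCase());
  // Join with a separator that won't appear in the fields, so ["ab", "c"] and ["a", "bc"] differ.
  const digest = createHash("sha256").update(parts.join("\u0000")).digest("hex");
  return `p-${digest.slice(0, 16)}`; // 16 hex chars is an arbitrary length for this sketch
}

// Prefer the ID from the CSV when present; otherwise derive one.
function resolvePlayerId(row: {
  playerId?: string;
  email: string;
  firstName: string;
  lastName: string;
  teamId: string;
}): string {
  const given = (row.playerId ?? "").trim();
  return given || fallbackPlayerId(row.email, row.firstName, row.lastName, row.teamId);
}
```

Because the derived ID depends only on the row's content, uploading the same file twice overwrites the same items instead of creating new ones. The trade-off is that a corrected typo in the email or name produces a different ID.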

When I’d pick this stack again

- You have CSVs from partners or teams.

- You need DynamoDB fast, but with safety.

- You like small tools over huge pipes.

- You want a one-button (or one-upload) load job.

If you need heavy transforms or joins, I’d reach for another tool. But for straight CSV to DynamoDB, this felt right.


Quick checklist you can steal

- S3 trigger set to the right prefix (like imports/).

- Lambda timeout high enough for your row count.

- Memory at least 512 MB for steady parse speed.

- Batch size 25, with retries and backoff.

- CSV parser set to handle BOM and quotes.

- Normalize keys (email lower case, trim spaces).

- Log summary: total rows, written count, skipped count.

- Write errors to S3 for follow-up.

Final take

This path—Lambda + TypeScript + csv-parse + DynamoDB—felt solid. It was calm, cheap, and honest. A few bumps, sure. But once I tuned backoff and cleaned the CSV quirks, it ran like a steady drum.

For an AWS-maintained perspective, the official blog post on ingesting CSV data to Amazon DynamoDB using AWS Lambda walks through a very similar flow and is worth skimming.

Would I use it again? Yep. I already have. Two more imports, same stack, zero fuss. You know what? Sometimes plain tools just do the job.